A while ago I got stuck in and actually read the paper she actually wrote. "Sketch of the Analytical Engine invented by Charles Babbage... with notes by the translator. Translated by Ada Lovelace"

In fact, she translated into English a paper by an Italian engineer, Luigi Menabrea, and clearly felt that his description did not go far enough, because she added her own notes at the end, which amount to more detail than the original translation.

The original paper by Menabrea was supposed to sell the idea of the Analytical Engine, a machine designed by Charles Babbage but not yet constructed. Money needed to be raised for this endeavour. Menabrea explains what the machine can do. To some extent he does try also to sell the idea, but his writing can be quite dry: 'To give an idea of this rapidity, we need only mention that Mr. Babbage believes he can, by his engine, form the product of two numbers, each containing twenty figures, in three minutes.'

Lovelace wanted to add more, and her writing is glowing with excitement about the machine. She added 7 detailed discussion notes, labelled A to G, and also 20 numbered footnotes. In the numbered footnotes she comments on issues with the translation. She also comments sometimes on how she feels that Menabrea hasn't quite understood completely the novelty and potential (for example 'M. Menabrea's opportunities were by no means such as could be adequate to afford him information on a point like this'). In the notes labelled A to G she explains many of the concepts that are the fundamentals of computing today.

Note A

This note spells out that the Analytical Engine is a general purpose computer. It doesn't just calculate fixed results, it analyses. It can be programmed. It's flexible. It links 'the operations of matter and the abstract mental processes of the most abstract branch of mathematical science'. This is not just a Difference Engine, but instead offers far more. She is very clear that 'the Analytical Engine does not occupy common ground with mere "calculating machines". It holds a position wholly its own'. It's also not just for calculations on numbers, but could operate on other values too. It might for instance compose elaborate pieces of music. 'The Analytical Engine is an embodying of the science of operations'. Lovelace has a beautiful turn of phrase that evokes her delight in imagining where this could go: it 'weaves algebraic patterns just as the Jacquard loom weaves flowers and leaves'.Note B

This note is about memory, the 'storehouse' of the Engine. It is about variables, and retaining values in memory. She describes how the machine can retain separately and permanently any intermediate results, and then supply these intermediate results as inputs to further calculations. Any particular function that is to be calculated is described by its variables and its operators, and this gives the machine its generality.Note C

This note is about reuse: the Engine uses input cards, as used in the Jacquard looms. Lovelace had toured the factories of the north with her mother and seen the Jacquard looms in action. They would have been the cutting edge of technology at the time. Intricate patterns were woven by machines, with thousands of fine threads, all controlled by punched cards. The cards needed to be created by card punching machines, with detailed tables of numbers that would be translated into the patterns of holes. The cards allowed a design to be repeated, and they were bound together in a sequence. She explains that these input cards, can be reused 'any number of times successively in the solution of one problem'. And furthermore, that this applies to just one card, or a whole set of cards. The train of cards can be rewound until they are in the correct position to be used again. |

| Jacquard loom, Nottingham Industrial Museum |

|

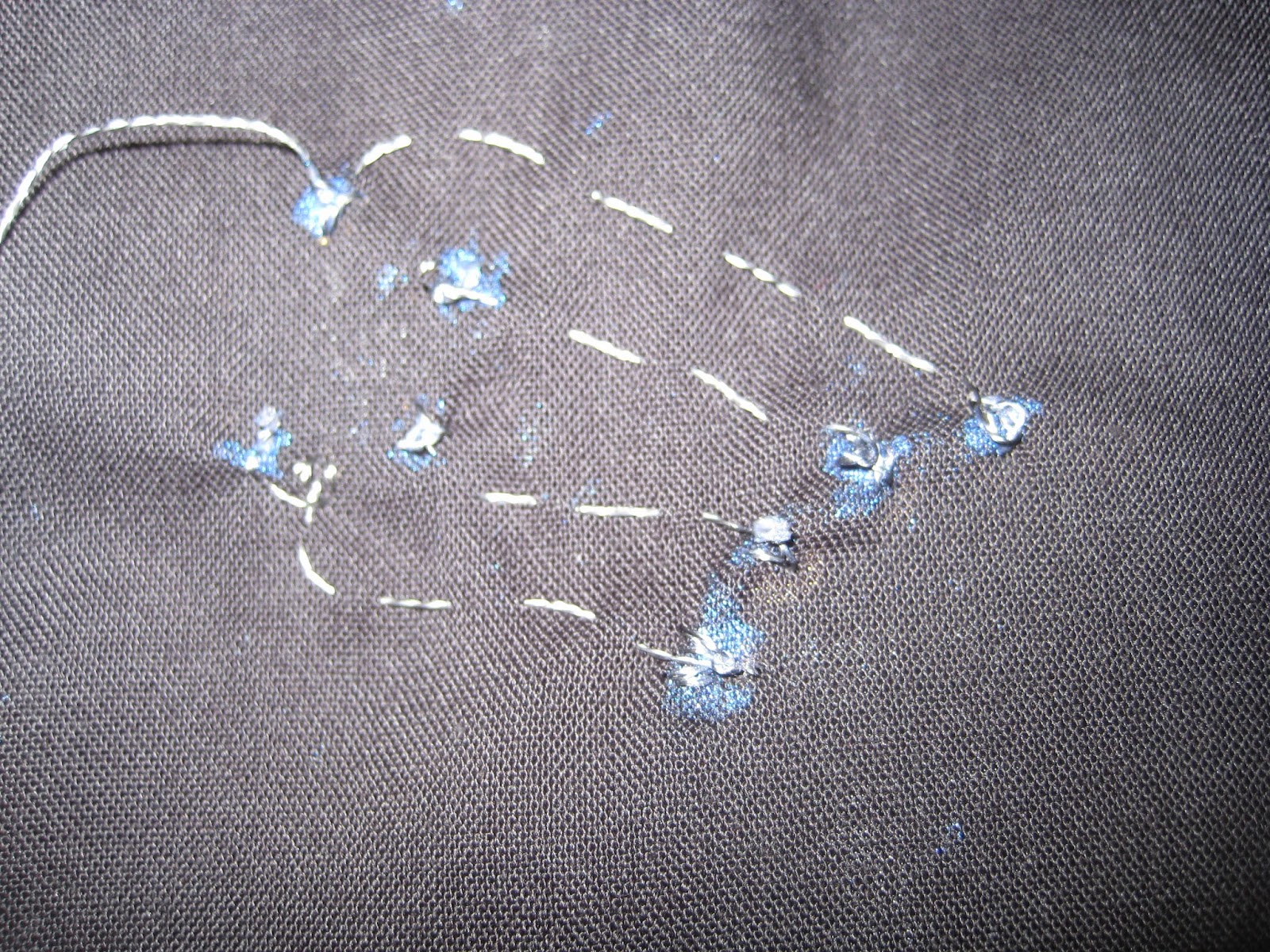

| Punched cards used in Jacquard loom, Nottingham Industrial Museum |

Note D

This note is about the order and efficiency of operations. She explains that there are input variables, intermediate variables, and final results. Every operation must have two variables 'brought into action' as inputs and one variable to use as the result. We can then trace back and inspect a calculation to see how a result was obtained. We can see how often a value was used. We can think about how to batch up operations to make them more efficient. She begins to imagine not just how the machine will operate, but how programmers will have to think carefully about their programs, how they will debug them and how they will optimise the algorithms they implement.Note E

In this note Lovelace explains how loops or 'cycles' can be used to solve series, for example to sum trigonometrical series. With a worked example (used in astronomical calculations), she abstracts out the operations needed to produce terms in the series and works on a notation for expressing cycles that include cycles. Note 18 expands further to explain that one loop can follow another, and there may be infinitely many loops.Note F

This note begins with the statement 'There is in existence a beautiful woven portrait of Jacquard, in the fabrication of which 24,000 cards were required.' Babbage was so taken with this woven portrait that he bought one of the few copies produced. The amount of work that machines could do, unfailingly, without tiring, was changing the face of industry. The industrial revolution was sweeping through the country. Lovelace explains how the Engine will be able to solve long and intensive with a minimum of cards (they can be rewound, and used in cycles). The machine can calculate a long series of results without making mistakes, solving problems 'which human brains find it difficult or impossible to work out unerringly'. It might even be set to work to solve as yet unsolved and arbitrary problems. 'We might even invent laws for series or formulae in an arbitrary manner, and set the engine to work upon them and thus deduce numerical results which we might not otherwise have thought of obtaining; but this would hardly perhaps in any instance be productive of any great practical utility, or calculated to rank higher than as a philosophical amusement.'Note G

She concludes with the scope and limitations of the Analytical Engine. She is keen to stress the machine's limitations and about using the machine for discovery: it doesn't create anything. It has 'no pretensions to originate anything'. Lovelace then enumerates what it can do, but warns against overhyping it. Alan Turing references her concerns in his 1950 paper Computing Machinery and Intelligence (which is also well worth a read). In this paper he discusses 9 objections that people may raise against the possibility of AI. One of these 9 objections is Lady Lovelace's Objection. He writes:Our most detailed information of Babbage's Analytical Engine comes from a memoir by Lady Lovelace (1842). In it she states, "The Analytical Engine has no pretensions to originate anything. It can do whatever we know how to order it to perform" (her italics).In Note G we also get the detailed trace of a substantial program to calculate the Bernoulli numbers, showing the order of operations combining variables to make results. The trace shows looping and storage, showing intermediate results, and the correspondence with the mathematical formulae being calculated at each step. She inspects the program for efficiency, working out the number of punched cards required, the number of variables needed and the numbers of execution steps.

Lovelace wonders, in the third paragraph of this Note, whether our minds are able to follow the analysis of the execution of the machine. Will we really be able to understand computers and their abilities? 'No reply, entirely satisfactory to all minds, can be given to this query, excepting the actual existence of the engine, and actual experience of its practical results.'

|

| Ada Lovelace is buried in Hucknall Church, Nottingham. She died aged 36, and never got to see the Analytical Engine constructed. |